Computer vision, which draws on several fields of artificial intelligence, allows computers to interpret and understand visual data. Much like sight, it enables computers to analyze what they see and make decisions based on that information. The growth of video and imagery, along with advances in machine learning and computing power, has made computer vision a prominent technology. Computer vision models are sophisticated and have many uses, including improving business efficiency and automating important decision systems.

However, a promising model becomes costly if it fails in practice. That is why how we build and deploy computer vision models matters. Machine learning practitioners are gradually adopting DevOps techniques for their model deployment systems, but that is not the whole story. Models bring additional concerns, such as code versioning, deployment environments, continual training and retraining, model quality, data drift, hyperparameters, and features. Some of these practices apply to machine learning models in general.

Computer vision has become an important business tool used for many purposes, from automating quality control on production lines to improving customer experiences in retail stores. Whether it is improving workflows or opening new opportunities for customer engagement, computer vision can change how companies operate over the next decade. This article looks at computer vision applications and how they are built.

What Is Computer Vision?

Computer vision can be defined as AI that helps computers “see” images from their environment in a way similar to how humans do. Before these advances, computers were unable to recognize even simple shapes or text. Developments in artificial neural networks and deep learning have advanced computer vision software development. Like other kinds of AI, computer vision attempts to automate tasks that otherwise rely on human capability. To do so, it tries to replicate human sight and the way humans make sense of the images they perceive. Its wide range of applications makes computer vision integral to many modern solutions. Computer vision can run in the cloud or on premises.

How Does Computer Vision Work?

Computer vision requires a lot of data. It analyzes the data repeatedly until it can discern differences and ultimately recognize images. For example, to train a computer to recognize car tires, it must be fed a large number of tire images so that it learns the differences among tires, particularly ones without defects, and can recognize those that need replacement. Two key technologies are used for this purpose: a type of machine learning called deep learning, and convolutional neural networks (CNNs). Machine learning uses algorithmic models that allow a computer to teach itself about the context of visual data. When enough data is fed through the model, the computer “looks” at the data and learns to tell one image from another. The algorithms let the machine learn by itself rather than having someone program it to recognize an image.

A CNN helps a machine learning or deep learning model “look” by breaking images down into pixels that are given tags or labels. It uses the labels to perform convolutions (a mathematical operation on two functions that produces a third function) and makes predictions about what it is “seeing.” The neural network runs convolutions and checks the accuracy of its predictions over a series of iterations until the predictions start to come true. It is then recognizing or seeing images in a way similar to humans. Much like a human making out an image at a distance, a CNN first discerns hard edges and simple shapes, then fills in detail as it runs iterations of its predictions. A CNN is used to understand single images. A recurrent neural network (RNN) is used in a similar way for video applications, to help computers understand how the images in a series of frames relate to one another.
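To make the idea of a convolution picking out hard edges more concrete, here is a minimal sketch in plain NumPy. It is an illustration under stated assumptions, not how a CNN framework works internally: the function name `convolve2d` and the toy image are hypothetical, and the kernel is a hand-picked Sobel filter, whereas a CNN learns its kernel values during training.

```python
import numpy as np

def convolve2d(image, kernel):
    """Valid-mode 2D convolution of a grayscale image with a small kernel."""
    kh, kw = kernel.shape
    flipped = kernel[::-1, ::-1]  # a true convolution flips the kernel first
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w), dtype=float)
    for i in range(out_h):
        for j in range(out_w):
            # Multiply the window by the kernel element-wise and sum the result
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * flipped)
    return out

# Toy 5x6 image (assumed for the example): dark left half, bright right half
image = np.array([[0, 0, 0, 255, 255, 255]] * 5, dtype=float)

# Hand-picked Sobel kernel that responds to horizontal changes in brightness
sobel_x = np.array([[-1, 0, 1],
                    [-2, 0, 2],
                    [-1, 0, 1]], dtype=float)

edges = convolve2d(image, sobel_x)
edge_strength = np.abs(edges)  # large values mark the dark-to-bright boundary
print(edge_strength[0])
```

The response is zero over the flat regions and large only where the window straddles the boundary, which is the sense in which early CNN layers tend to respond to edges before later layers add detail.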

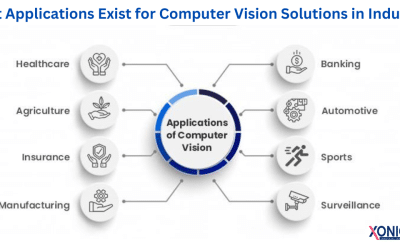

Understanding Computer Vision Applications

Computer vision focuses on enabling machines to understand and act on images, and it has become an essential element of innovation in many sectors. From retail to healthcare, computer vision is changing conventional business models, increasing efficiency, improving customer experiences, and generating new income streams. With the foundations in place, let’s look at some applications, which range from simple algorithms to advanced machine learning architectures. Consider these notable examples:

- Facial recognition algorithms in mobile photography applications automate content curation and enable accurate tagging on social media.

- Algorithms that detect road boundaries, built into autonomous vehicles, support safe and precise navigation at high speeds.

- Optical character recognition (OCR) engines enable visual search applications to read text within images.

Although these applications differ in what they do, they share a common thread: they take raw and often disorganized visual input and turn it into structured, easily understood information. This transformation benefits the end user by converting what might look like ambiguous visual data into useful information across many applications.

Applications Of Computer Vision

We’ll now look at some of the main applications of computer vision.

Autonomous Vehicles And Computer Vision

Computer vision is the basis for how autonomous vehicles interpret their surroundings. High-definition cameras provide views from multiple angles, which sophisticated algorithms ingest in real time. The system identifies road edges, reads traffic signals, and detects other objects such as vehicles and pedestrians. The autonomous system processes this information, allowing the car to maneuver through challenging road conditions and surfaces, improving safety and efficiency.

Facial Identification Through Computer Vision

Computer vision is a significant contributor to facial recognition technology, which improves device security and enhances the functionality of apps. Specialized algorithms analyze facial features in an image and match them against large databases of face profiles. For example, consumer devices use these techniques to authenticate users, while social media platforms use them to identify and tag users. Law enforcement applications use more advanced versions of these algorithms to locate suspects and persons of interest across multiple video streams.

Augmented And Mixed Realities

Computer vision is integral to augmented and mixed reality technologies, especially for recognizing objects in real environments. Its algorithms can identify real-world planes, such as floors and walls, which are crucial for establishing dimensions and depth. This data is then used to precisely overlay virtual objects onto the real world as seen through devices such as tablets, smartphones, and smart glasses.

Healthcare: A New Frontier For Computer Vision

In healthcare technology, computer vision offers an opportunity to automate parts of the diagnostic process. In particular, machine-assisted diagnosis can detect cancerous changes in skin images or spot anomalies in X-ray and MRI scans. This kind of automation can improve diagnostic accuracy and reduce the time and effort required for medical diagnosis. The use of computer vision across many industries, supported by the latest computational technology, represents both a technological step forward and a paradigm change. Its potential is immense, and current implementations may be only the tip of the iceberg.

Workings Of Computer Vision Applications

In both neuroscience and machine learning, one of the biggest challenges is understanding the computational mechanics of the brain. Although neural networks are said to simulate these mechanisms, no scientific theory validates that claim. The same difficulty extends to computer vision, which lacks an established standard for comparing its algorithms with the way the human brain processes images.

At its simplest, computer vision is about recognizing patterns in images. Training an algorithm for this typically involves feeding it a large set of labeled images. The images are processed by specialized algorithms that identify attributes such as colors, shapes, and the spatial relationships among those shapes. For example, imagine training a system with images of cats. The algorithm analyzes each photograph, looking for key characteristics such as colors, shapes, and how the shapes relate to one another in space. This allows the computer to build a composite “cat profile,” which it then uses to find cats in new, unlabeled pictures.

To understand some of the technical details, consider how a grayscale picture, such as a photograph of Abraham Lincoln, is processed. Each pixel’s brightness is encoded as an 8-bit number ranging from 0 (black) to 255 (white). By converting visual elements into numerical data, computers can read and interpret images. From this foundation, computer vision systems can extend beyond pattern recognition to more sophisticated mechanisms for interpreting visual data.
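The 8-bit grayscale encoding described above can be shown with a small NumPy array. This is only a sketch: the 3x3 `patch` values and the helper names `normalize` and `threshold` are made up for illustration, and scaling to the range 0 to 1 is a common preprocessing convention rather than a requirement.

```python
import numpy as np

# A hypothetical 3x3 grayscale patch: each value is one pixel's brightness,
# stored as an unsigned 8-bit integer (0 = black, 255 = white).
patch = np.array([[0, 128, 255],
                  [64, 128, 192],
                  [255, 128, 0]], dtype=np.uint8)

def normalize(img):
    """Scale 8-bit pixel values to floats between 0.0 and 1.0."""
    return img.astype(np.float32) / 255.0

def threshold(img, cutoff=128):
    """Return a boolean mask that is True where a pixel is at least `cutoff`."""
    return img >= cutoff

print(patch.dtype, patch.min(), patch.max())
print(normalize(patch))
print(threshold(patch))
```

Once an image is a grid of numbers like this, operations such as thresholding, filtering, or feeding the values to a model are ordinary array arithmetic.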

Computer Vision Application Development Lifecycles

It’s crucial to start by analyzing the application’s requirements. What is its purpose? What are you looking to achieve? What budget are you working with? It is also essential to determine what can and cannot be achieved with machine learning. Can machine learning actually perform the tasks you want it to do? It is equally important to decide on the source of the input data.

Prototyping Your Model

The application may have many possible uses, but it generally helps to choose one specific use case for prototyping. Prototyping can create extra work: for instance, the same collection of images might have to be labeled twice, first for the prototype and then again for the production application. Planning ahead helps avoid this duplication, although rework may still be necessary if the data changes in production. Even so, prototyping is a best practice. Getting something working, even with less-than-perfect capabilities, is extremely valuable for determining requirements and uncovering blind spots. Even the most proficient developers won’t get it right on the first attempt.

After identifying a specific use case, determine the application’s input and output requirements. For example, we might want to count the number of people in line at a shop at any given time, or determine which items each customer buys. It is best to work through such scenarios one at a time, from beginning to end, and then iterate. Once you have defined the problem and gathered the data, each scenario may require different amounts of data, model training, and testing. In the retail store scenario, you need to detect and track individuals and then build your application logic around whether each person is in the queue.

It’s essential to keep the amount of data manageable during the initial prototyping stage. If you already have a trained model that can detect people, use it! Determine whether you actually need to collect data and build a dataset to solve your problem. If you’re lucky enough to have an annotated dataset or a working model for your problem, gather data from the deployment system and test against it. The model may not work as expected because of the camera angle or lighting, for example. By adjusting the model’s parameters, such as those that define the size and scale of the objects to detect, you may be able to adapt it to your application. You can also try different models. Skipping the data-creation part of the work can save considerable time and effort.
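For the retail queue scenario, the application logic on top of an existing person detector can start out very simple. The sketch below assumes the detector returns bounding boxes with confidence scores; the `Box` class, the zone coordinates, the `min_score` value, and the sample detections are all hypothetical. It counts a person as queued when the bottom center of their box falls inside a rectangular queue zone.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Box:
    """Axis-aligned rectangle in pixel coordinates (origin at top-left)."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def foot_point(self):
        # Bottom center of the box, a rough proxy for where the person stands
        return ((self.x_min + self.x_max) / 2, self.y_max)

def in_zone(point, zone):
    """True if the (x, y) point lies inside the rectangular zone."""
    x, y = point
    return zone.x_min <= x <= zone.x_max and zone.y_min <= y <= zone.y_max

def count_in_queue(detections, zone, min_score=0.5):
    """Count confident person detections whose foot point is inside the zone."""
    return sum(
        1
        for box, score in detections
        if score >= min_score and in_zone(box.foot_point(), zone)
    )

# Hypothetical queue zone and detector output for a single frame
queue_zone = Box(100, 200, 400, 480)
detections = [
    (Box(120, 100, 180, 300), 0.92),  # foot point (150, 300): in the zone
    (Box(300, 150, 360, 400), 0.81),  # foot point (330, 400): in the zone
    (Box(500, 120, 560, 380), 0.95),  # foot point (530, 380): outside the zone
    (Box(200, 180, 250, 350), 0.30),  # in the zone, but below min_score
]

print(count_in_queue(detections, queue_zone))
```

A fixed rectangle is the simplest possible zone. A real deployment might need a polygon that matches the camera angle, which is exactly the kind of requirement a prototype helps uncover.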

Data Collection And Annotation

If you do need to collect data, start by annotating only a small number of images. Don’t annotate too much at first, since you will likely need to redo the work. Annotation is by far the biggest hurdle in the process. It is highly recommended that you do it in-house during the prototype phase, because it helps you understand the computer vision problem better and prompts you to rethink the annotation strategy in light of the problems that could arise in your application. For example, returning to the retail scenario: if several people are waiting in line and three of them form a group (say, a mother and two children), how should your system handle it? How do you handle a person who joins the line and later leaves? If a realistic image of a person, such as a poster of a player on a wall, appears in the system’s field of view and could be detected as a person, what is the best way to deal with it? Once you have settled your annotation strategy, you can move into the next phase with confidence.
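One way to make these edge cases explicit is to record them as fields in the annotation itself. The schema below is purely illustrative: the `PersonAnnotation` class, its field names, and the policy of counting a group as a single queue unit are assumptions chosen for the example, not a standard format.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

@dataclass
class PersonAnnotation:
    """One labeled person in a frame (hypothetical schema)."""
    box: Tuple[int, int, int, int]   # (x_min, y_min, x_max, y_max) in pixels
    group_id: Optional[int] = None   # shared id for people queuing together
    is_depiction: bool = False       # poster, screen, or other image of a person
    left_queue: bool = False         # joined the line earlier but has walked away

def count_queue_units(annotations):
    """Count queue units: real people still in line, with each group counted once.

    Counting a group as one unit is an assumed policy for this example.
    """
    group_ids = set()
    singles = 0
    for ann in annotations:
        if ann.is_depiction or ann.left_queue:
            continue  # not a real, currently queuing person
        if ann.group_id is None:
            singles += 1
        else:
            group_ids.add(ann.group_id)
    return singles + len(group_ids)

frame_annotations = [
    PersonAnnotation(box=(100, 80, 160, 300), group_id=1),         # mother
    PersonAnnotation(box=(150, 180, 190, 300), group_id=1),        # child
    PersonAnnotation(box=(185, 190, 225, 300), group_id=1),        # child
    PersonAnnotation(box=(260, 90, 320, 300)),                     # solo customer
    PersonAnnotation(box=(400, 40, 480, 260), is_depiction=True),  # wall poster
    PersonAnnotation(box=(520, 100, 580, 310), left_queue=True),   # walked away
]

print(count_queue_units(frame_annotations))
```

Deciding on fields like these before large-scale labeling is what saves the relabeling effort later, whichever policy you end up choosing.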

Testing

Once you have addressed and accounted for these edge cases, it is time to evaluate the models and the system using footage from the actual deployment. Before moving on, it’s crucial to understand your accuracy targets and what limits accuracy, for example whether the data or the model is the bottleneck. If accuracy is limited by the model rather than the data, adding more data won’t help, and you’ll need to examine alternative approaches. As with any application testing, you will encounter some unexpected results, so your team needs to be flexible in finding solutions to problems as they occur.

In any software project, access to debugging tools is essential. Although tools for debugging models are widely available and used, the need for tools that let developers quickly identify and isolate failures in the deployed application as a whole is not widely appreciated. Consider a situation where the application’s performance depends on a combination of models, tracking, and other preprocessing and post-processing components. When such a complex system fails, it can be difficult to identify which component caused the failure. It is vital to design and implement system-level debugging techniques for testing that the entire development team understands. This is similar to the system integration tests carried out in traditional software development.
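A simple form of system-level debugging is to run each pipeline stage through a wrapper that records whether it succeeded and how long it took. The sketch below is one possible approach under stated assumptions: `run_pipeline` and the three stub stages (`preprocess`, `detect`, `track`) are hypothetical stand-ins for real components.

```python
import time

def run_pipeline(frame, stages):
    """Run stages in order and return (result, trace).

    The trace records one entry per executed stage. Execution stops at the
    first stage that raises, so the failing component is easy to identify.
    """
    trace = []
    data = frame
    for name, stage_fn in stages:
        start = time.perf_counter()
        try:
            data = stage_fn(data)
        except Exception as exc:
            trace.append({"stage": name, "ok": False, "error": repr(exc),
                          "seconds": time.perf_counter() - start})
            return None, trace
        trace.append({"stage": name, "ok": True,
                      "seconds": time.perf_counter() - start})
    return data, trace

# Stub stages standing in for real components (assumed for the example)
def preprocess(frame):
    return [value / 255 for value in frame]

def detect(pixels):
    return [index for index, value in enumerate(pixels) if value > 0.5]

def track(detections):
    if not detections:
        raise ValueError("no detections to track")
    return {"tracked": len(detections)}

stages = [("preprocess", preprocess), ("detect", detect), ("track", track)]

# A dark frame produces no detections, so the tracking stage fails
result, trace = run_pipeline([10, 20, 30], stages)
failed = [entry["stage"] for entry in trace if not entry["ok"]]
print(result, failed)
```

Because the trace names the stage and keeps the error, a failure in the deployed system can be attributed to a specific component instead of to the application as a whole.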

Deployment, Optimizations, And Maintenance

As with conventional software development, computer vision software goes through deployment, optimization, and maintenance stages. When the software is first deployed, it is crucial to look at every failure and its cost. In particular, the cost of missing some detections differs from the cost of a handful of false detections. Another aspect to consider is the frame rate, in frames per second (FPS), that the actual system can achieve. In some situations, detection time and FPS targets might not be met, for example when processing time varies or when computational resources are shared among several applications. This information helps you optimize the application’s performance. Maintenance usually involves adding new objects to detect or incorporating data from failure cases.
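Both points, unequal error costs and achievable frame rate, are easy to quantify with a few lines of code. The following is a minimal sketch: the function names, the cost values, and the error counts for the two configurations are invented for illustration, and real costs depend entirely on the application.

```python
import time

def error_cost(missed, false_alarms, cost_missed, cost_false_alarm):
    """Total cost of errors when the two error types are priced differently."""
    return missed * cost_missed + false_alarms * cost_false_alarm

def measure_fps(process_frame, frames):
    """Average frames per second achieved by process_frame over the frames."""
    start = time.perf_counter()
    for frame in frames:
        process_frame(frame)
    elapsed = time.perf_counter() - start
    return len(frames) / elapsed if elapsed > 0 else float("inf")

# Hypothetical error counts for two detector configurations, assuming a
# missed detection costs five times as much as a false detection.
cost_sensitive = error_cost(missed=2, false_alarms=10,
                            cost_missed=5.0, cost_false_alarm=1.0)
cost_strict = error_cost(missed=8, false_alarms=1,
                         cost_missed=5.0, cost_false_alarm=1.0)
print(cost_sensitive, cost_strict)

# Stand-in workload; a real test would call the deployed model on real frames
fps = measure_fps(lambda frame: sum(frame), [[1, 2, 3]] * 1000)
print(f"{fps:.0f} FPS")
```

With these assumed costs, the configuration that makes more total errors (12 versus 9) is still the cheaper one, which is why raw error counts alone are a poor basis for choosing a detection threshold. FPS should be measured on the target hardware while the other applications sharing it are running.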

Final Thoughts

Building and deploying computer vision applications requires in-depth knowledge of the variables that can limit their effectiveness. By tackling these issues, developers can improve their models’ accuracy, efficiency, and ability to generalize. Data preprocessing and augmentation are vital for strong performance in computer vision tasks. Strategies such as handling varying aspect ratios, domain-specific preprocessing, and data augmentation help increase generalization and robustness. Acquiring varied datasets whenever feasible is crucial for capturing real-world scenarios more accurately. Selecting an appropriate model architecture is another vital factor. The process of creating computer vision software illustrates how AI technology is built. From the initial concept to deployment, each stage requires focus, innovation, and ethical consideration. Going forward, continued learning, adaptability, and a firm commitment to ethical values will shape the future of computer vision and open new opportunities across many areas of human endeavor.